Popup campaign ROI: real data from 5 ecommerce brands

Key takeaways:

Your popup tool's dashboard shows attributed revenue: purchases from people who clicked a campaign. That's not the same as the revenue your campaigns actually generated.

The only way to prove that your popups drive real sales is to run an A/B test with a control group: some visitors see nothing, and you compare what both groups spend.

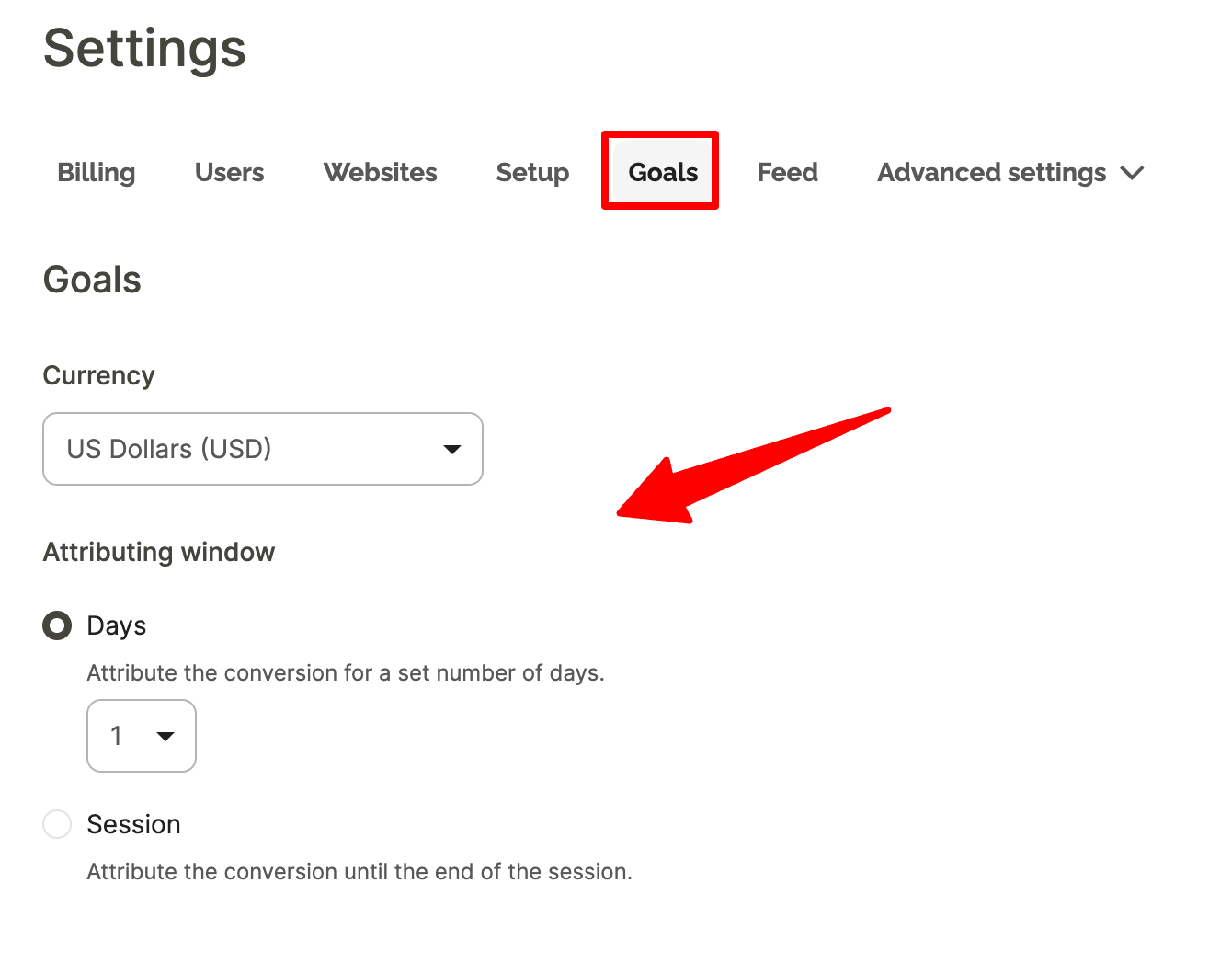

Set up goal tracking before you launch anything. A 24- or 48-hour revenue attribution window gives you more honest numbers than the 5-day default.

Brands actively running multiple campaign types can expect around 15% of revenue to be attributed to Wisepops as a realistic starting point; best-in-class brands have reached 20–24%.

Most dashboards in popup software make ROI look simple: attributed revenue goes up, campaigns are working.

But the reality is more complicated.

Attributed revenue is a useful signal, but it's not the same as incremental revenue. Understanding the difference is what separates teams that can defend their popup investment from those that can't.

This article covers what ROI looks like across real Wisepops customers, what each revenue metric actually means, and how to run a proper experiment that shows true incremental impact.

Get started:

See what your popup campaigns are actually earning

Set up goal tracking, run control group experiments, and measure true incremental revenue — free for 14 days, no credit card required.


What ROI can you expect from Wisepops campaigns?

The single biggest factor is whether discounting is part of your strategy. Popup campaigns with discounts convert at 7.45% on average, roughly 1.5 times the 4.82% average across all popups.

That gap flows directly into attributed revenue figures. The brands in the table running welcome discounts and cart recovery offers will show materially higher attributed revenue than those relying on product recommendations or content alone.

Here's what the data shows across specific Wisepops customers running active, multi-campaign strategies:

| Brand | Industry | Period | Attributed revenue | % of total revenue | ROI |
|---|---|---|---|---|---|

Three things that significantly affect these numbers:

Discounting. Brands using welcome popups with discounts and cart recovery offers will show higher attributed revenue than those relying on product recommendation popups or informational campaigns, because discounts directly trigger trackable purchases.

Attribution window. OddBalls used a 24-hour window. Others configure longer windows. A longer window captures more conversions but also picks up purchases that were already in progress before the campaign interaction.

Goal tracking setup. Attribution only starts from the moment goal tracking is correctly configured and assigned to each campaign. Events prior to setup will not be attributed — there is no retroactive tracking.

These are best-in-class results from brands running and optimizing multiple campaign types simultaneously.

For brands newer to the platform or running fewer campaigns, around 15% attributed revenue is a more realistic starting point.

Examples of the most effective popup campaigns for maximum ROI

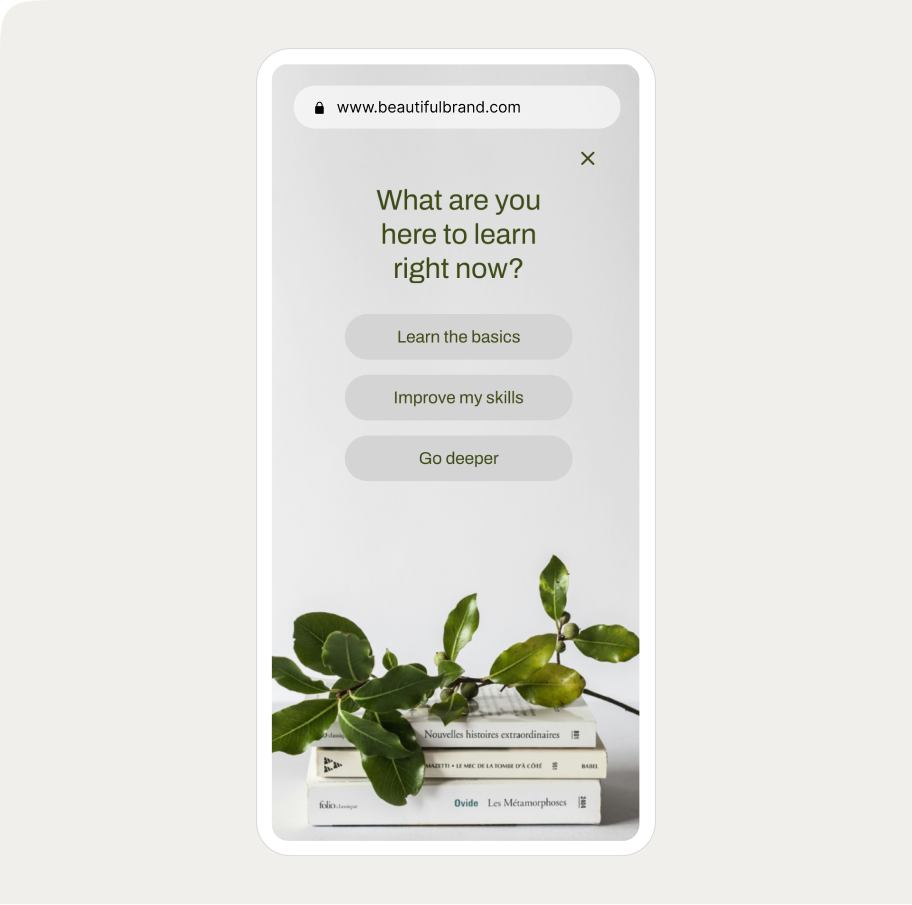

Survey branching popup

Ask visitors what they want to learn or shop for and route them to tailored offers.

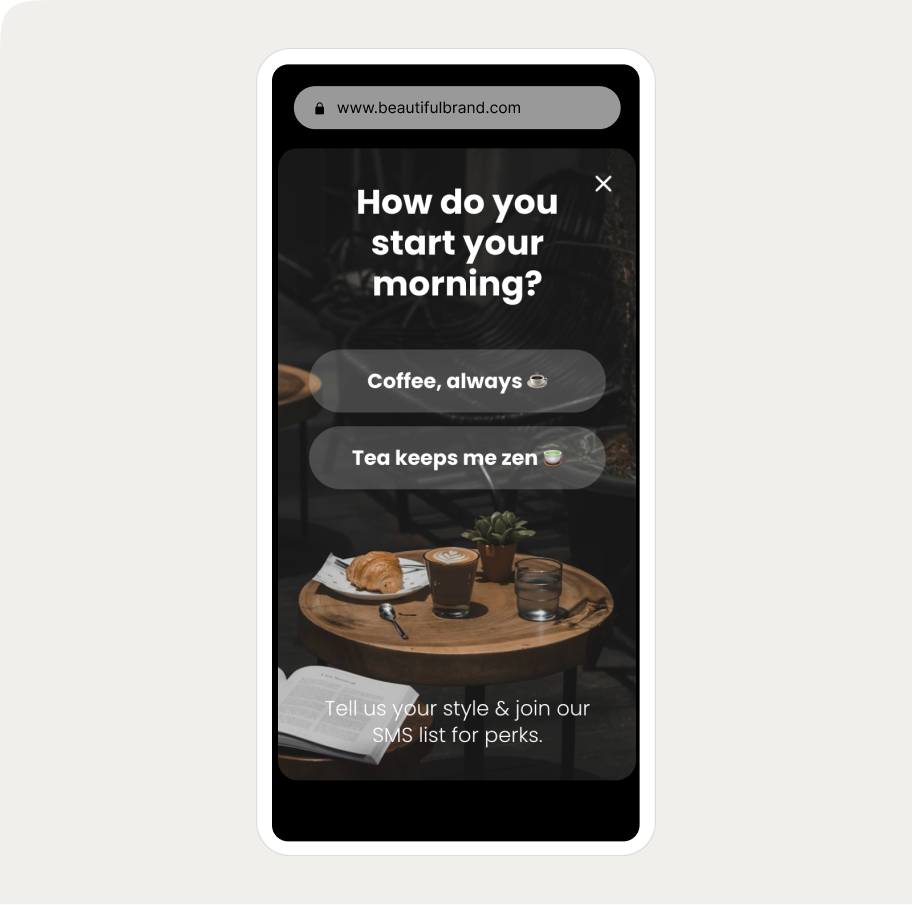

Ice breaker SMS signup popup

A popup that learns what visitors want in order to recommend the right products.

Email & SMS capture

Turn new visitors into subscribers and collect their email addresses and phone numbers.

AI-powered cart recovery

Use AI to predict cart abandonment before it happens and maximize recovery.

NPS survey

Collect more survey responses with a quick, engaging NPS survey.

Branching education popup

Ask visitors what they want to learn or shop for and route them to tailored offers or content.

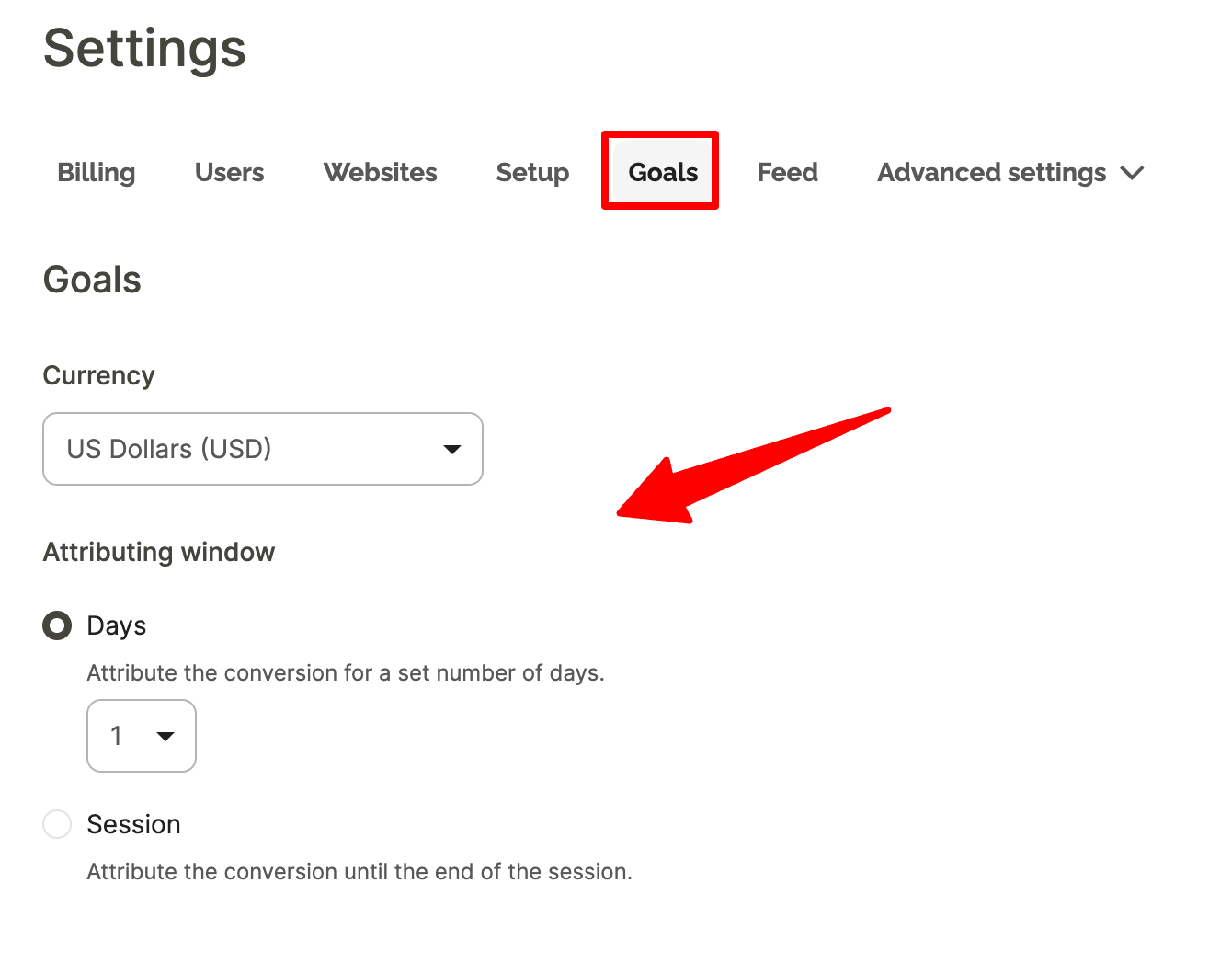

How to measure popup ROI

Set up goal tracking before you launch anything

Attribution only starts from the moment goal tracking is configured and assigned to each campaign. If you haven't done this yet, here's how to configure it before going any further.

How long should your attribution window be?

The default in Wisepops is 5 days. We recommend setting it to 2 days instead.

Here's why: a 5-day window will attribute purchases to a campaign even when the visitor had already decided to buy before seeing it — for example, a returning customer who clicks a popup on Monday and completes a repeat order on Friday.

A 2-day window is long enough to capture genuine influence (most purchase decisions happen within 48 hours of a product interaction) while reducing the chance of crediting campaigns for conversions they didn't really drive. It gives you a more defensible number when you're reporting results internally.
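To make the window trade-off concrete, here is a minimal Python sketch of click-based attribution that replays the Monday/Friday example above. It assumes simplified event records (one CTA click per visitor); the `attributed_revenue` helper and its field layout are hypothetical illustrations, not the Wisepops API.

```python
from datetime import datetime, timedelta


def attributed_revenue(clicks, purchases, window, tracking_start):
    """Sum revenue from purchases made within `window` after a tracked CTA click.

    clicks:         dict mapping visitor_id -> datetime of that visitor's CTA click
                    (hypothetical simplification: one click per visitor)
    purchases:      list of (visitor_id, purchased_at, amount) tuples
    window:         attribution window as a timedelta
    tracking_start: when goal tracking was configured; earlier purchases are
                    never attributed because tracking is not retroactive
    """
    total = 0.0
    for visitor_id, purchased_at, amount in purchases:
        clicked_at = clicks.get(visitor_id)
        if clicked_at is None:
            continue  # visitor never clicked the campaign: nothing to attribute
        if purchased_at < tracking_start:
            continue  # happened before goal tracking existed
        if clicked_at <= purchased_at <= clicked_at + window:
            total += amount
    return total


# Two visitors click a popup on Monday, June 3, 2024.
clicks = {
    "returning_customer": datetime(2024, 6, 3, 10, 0),
    "new_visitor": datetime(2024, 6, 3, 10, 0),
}
purchases = [
    ("returning_customer", datetime(2024, 6, 7, 10, 0), 80.0),  # repeat order on Friday
    ("new_visitor", datetime(2024, 6, 4, 9, 0), 50.0),          # buys the next morning
]
tracking_start = datetime(2024, 6, 1)

five_day = attributed_revenue(clicks, purchases, timedelta(days=5), tracking_start)
two_day = attributed_revenue(clicks, purchases, timedelta(days=2), tracking_start)
print(five_day, two_day)  # 130.0 50.0
```

The 5-day window credits the campaign with the returning customer's Friday repeat order; the 2-day window keeps only the purchase that followed the click closely.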

Understanding Wisepops revenue metrics

| Metric | Definition | Where you see it in Wisepops |
|---|---|---|
| Attributed revenue | Revenue from visitors who clicked a tracked CTA and then completed a goal within the attribution window | Campaign dashboard |
| Total revenue | All revenue from visitors who were exposed to a campaign, whether they clicked or not | Experiments |
| Revenue per visitor | Total revenue generated per visitor across all revenue-associated goals | Experiments |
| Incremental revenue | Difference in total revenue per visitor between the campaign group and a control group that saw nothing | Calculated from A/B test results |
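How these metrics relate can be sketched with a handful of toy visitors. This is an illustrative Python sketch, not Wisepops' internal calculation: the `Visit` record, its `attributed` flag (clicked a tracked CTA, then converted within the window), and the `summarize` helper are hypothetical, and the revenue figures are made up.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    group: str         # "campaign" (eligible to see the popup) or "control" (saw nothing)
    attributed: bool   # clicked a tracked CTA, then converted within the attribution window
    revenue: float     # total revenue from this visitor, clicked or not


def summarize(visits):
    """Per-group attributed revenue, total revenue, and revenue per visitor."""
    groups = {}
    for v in visits:
        g = groups.setdefault(v.group, {"visitors": 0, "total": 0.0, "attributed": 0.0})
        g["visitors"] += 1
        g["total"] += v.revenue
        if v.attributed:
            g["attributed"] += v.revenue
    for g in groups.values():
        g["rpv"] = g["total"] / g["visitors"]
    return groups


visits = [
    Visit("campaign", True, 100.0),
    Visit("campaign", True, 60.0),
    Visit("campaign", False, 40.0),
    Visit("campaign", False, 0.0),
    Visit("control", False, 100.0),
    Visit("control", False, 0.0),
    Visit("control", False, 40.0),
    Visit("control", False, 0.0),
]

stats = summarize(visits)
incremental_rpv = stats["campaign"]["rpv"] - stats["control"]["rpv"]
print(stats["campaign"]["attributed"], stats["campaign"]["total"], incremental_rpv)  # 160.0 200.0 15.0
```

In this toy data, the dashboard would credit the campaign with 160.0 of attributed revenue, while the control comparison shows only 15.0 extra per visitor (60.0 across the four campaign visitors).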

From popup A/B testing to revenue measurement

If you're new to popup A/B testing, this guide covers the full setup process — what to test, how to create variants, and how to read basic results.

Let's focus on measuring actual revenue impact.

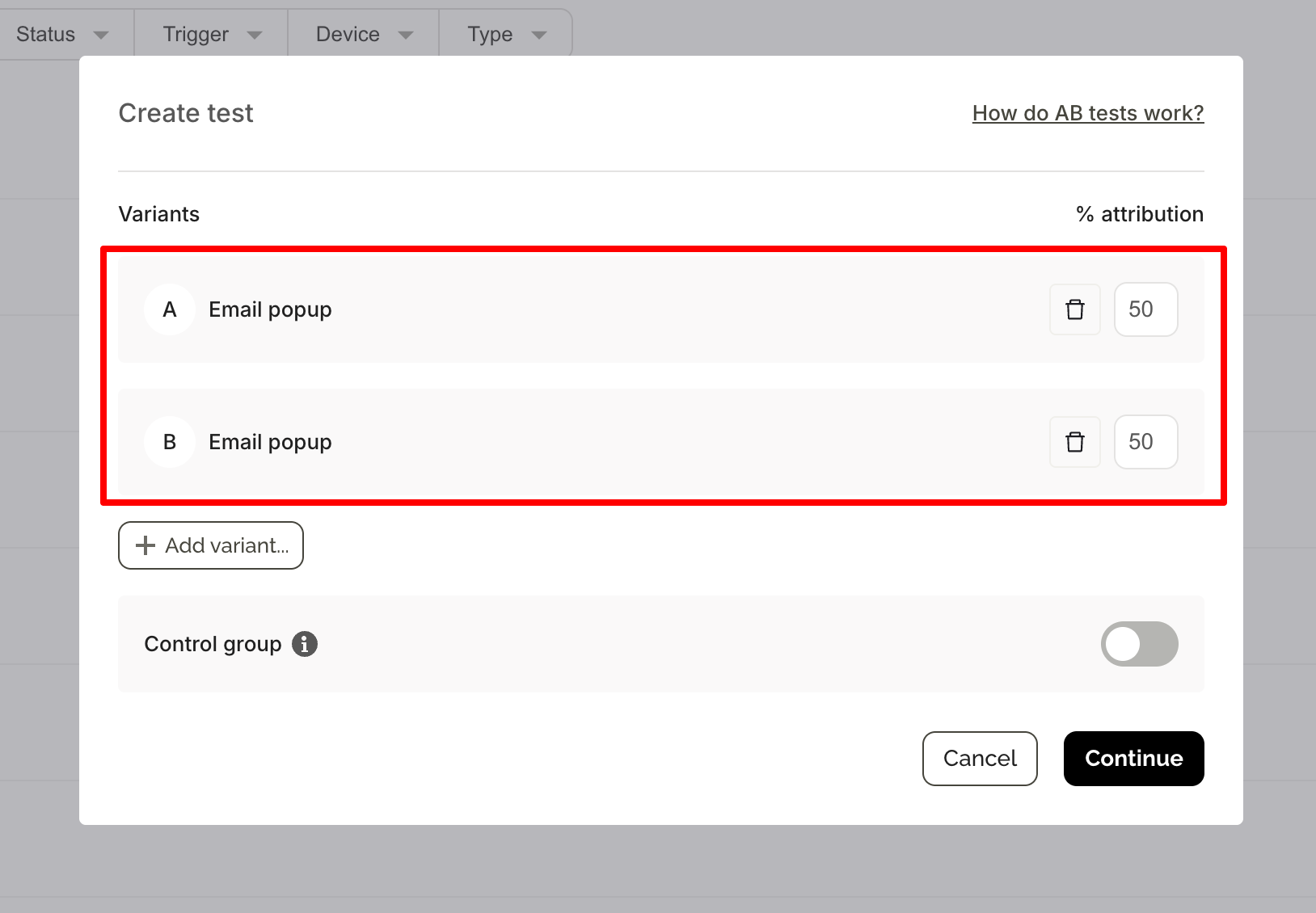

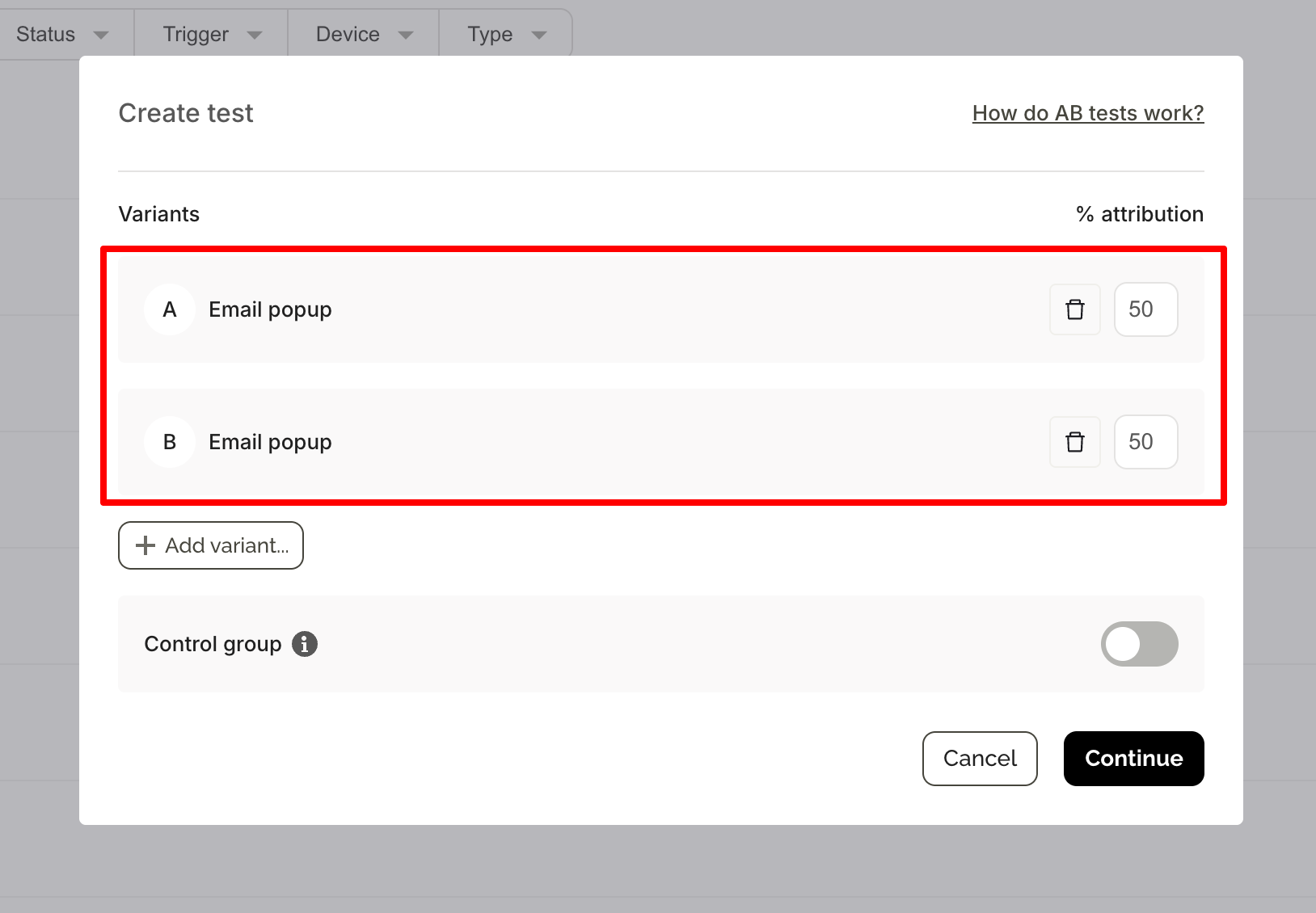

Standard A/B testing

Standard A/B testing compares two campaign variants against each other using click-based attributed revenue, which tells you which version performs better. What it can't tell you is whether either version adds revenue compared to showing nothing at all. That requires a control group.

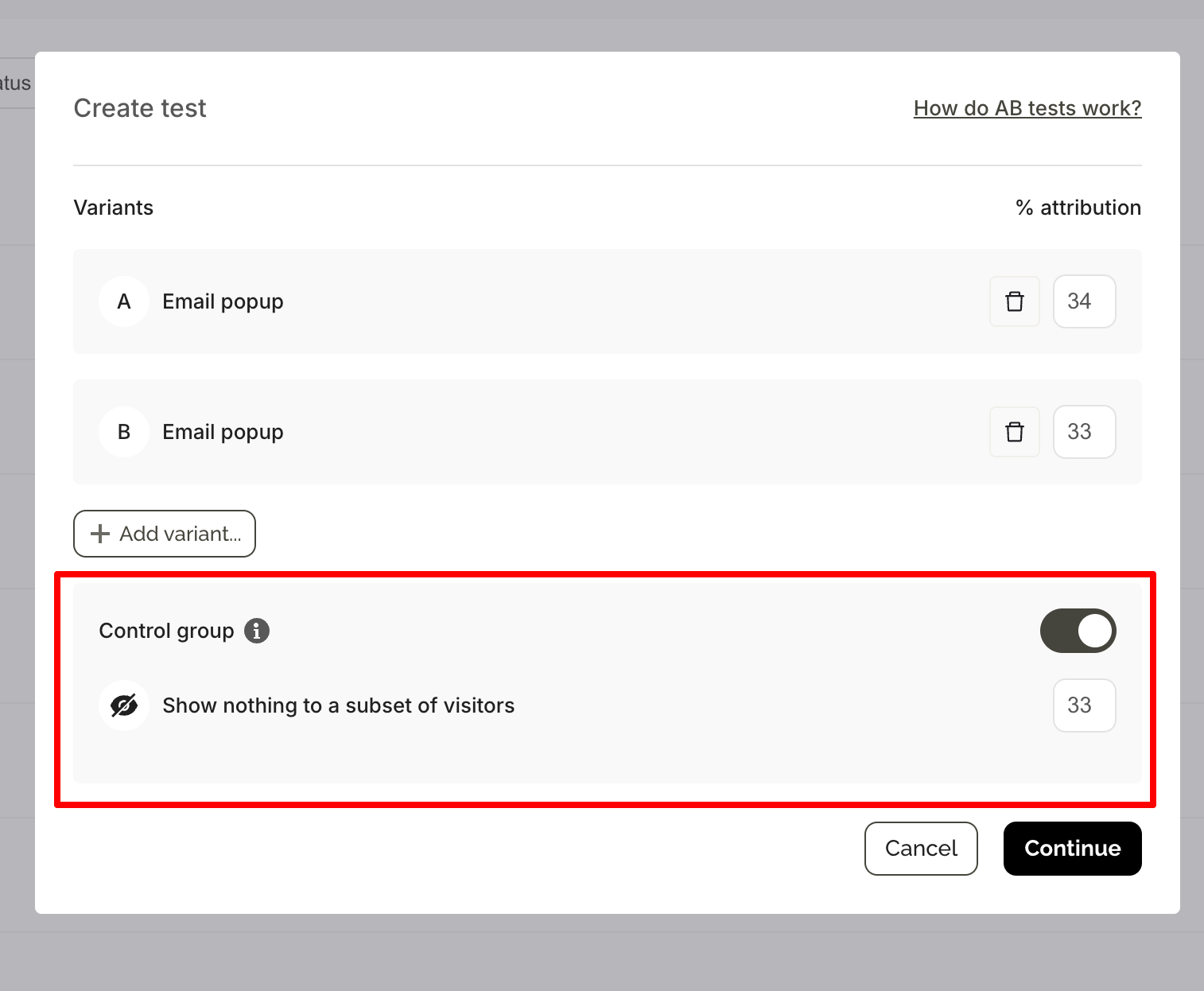

Popup A/B testing with a control group

With a control group, a share of visitors is excluded from seeing any campaign. Wisepops tracks the full behavior of both groups — not just clicks, but sessions, page views, and total revenue across the entire visit.

The difference in revenue per visitor between the two groups is your incremental revenue: what the campaign caused, not just what it correlated with.
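Written out, with uplift expressed relative to the control group's revenue per visitor (RPV), as in the results section of this guide:

```latex
\begin{aligned}
\text{RPV}_g &= \frac{\text{total revenue}_g}{\text{visitors}_g}, \qquad g \in \{\text{campaign}, \text{control}\} \\
\Delta\text{RPV} &= \text{RPV}_{\text{campaign}} - \text{RPV}_{\text{control}} \\
\text{uplift} &= \frac{\Delta\text{RPV}}{\text{RPV}_{\text{control}}}
\end{aligned}
```

For example, with hypothetical figures of 2.30 RPV in the campaign group and 2.00 in the control group, the incremental revenue is 0.30 per visitor and the uplift is 0.30 / 2.00 = +15%.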

How to set up a control group experiment for popups

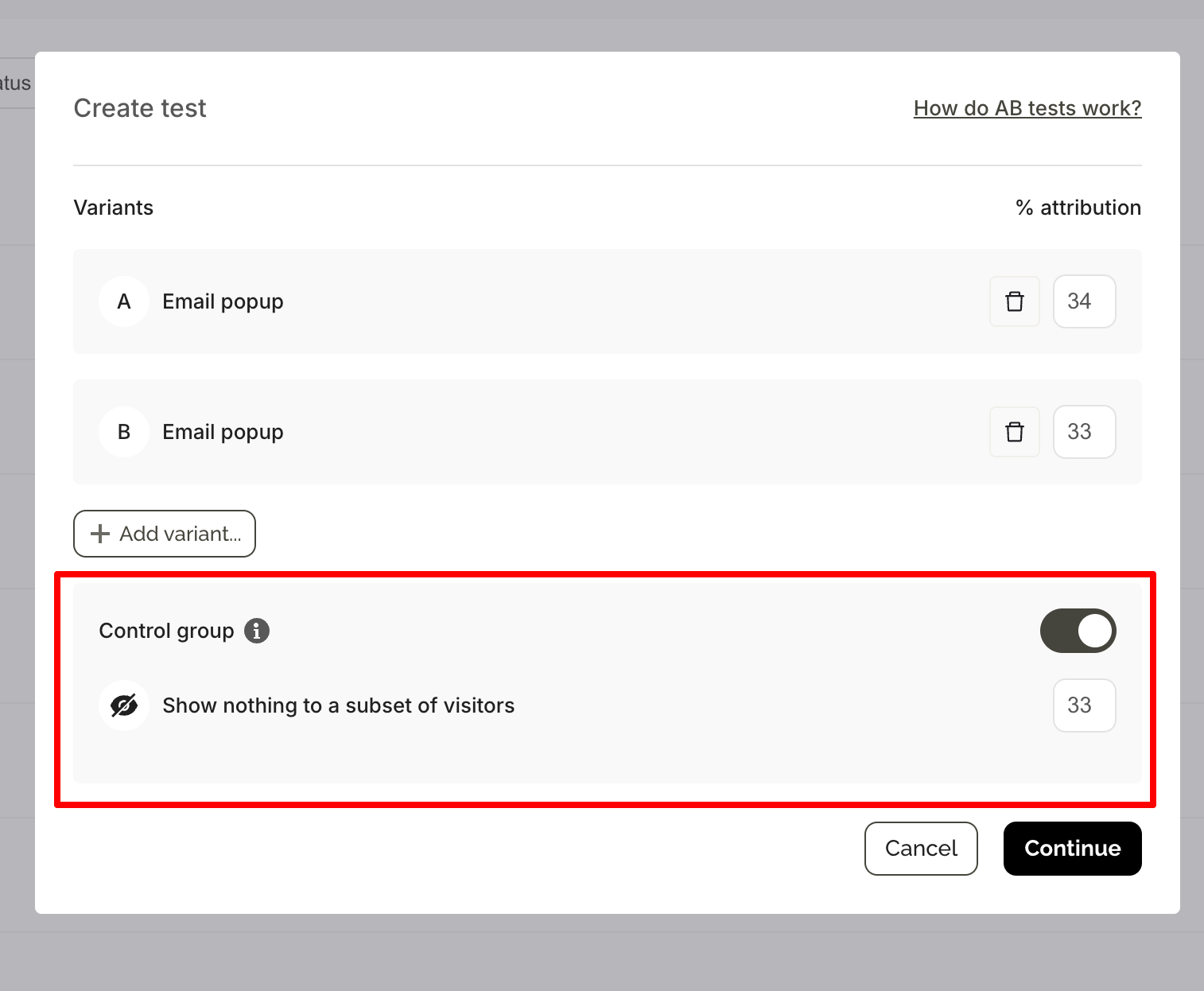

When creating an A/B test, toggle on the control group option to set aside a share of visitors who will see nothing.

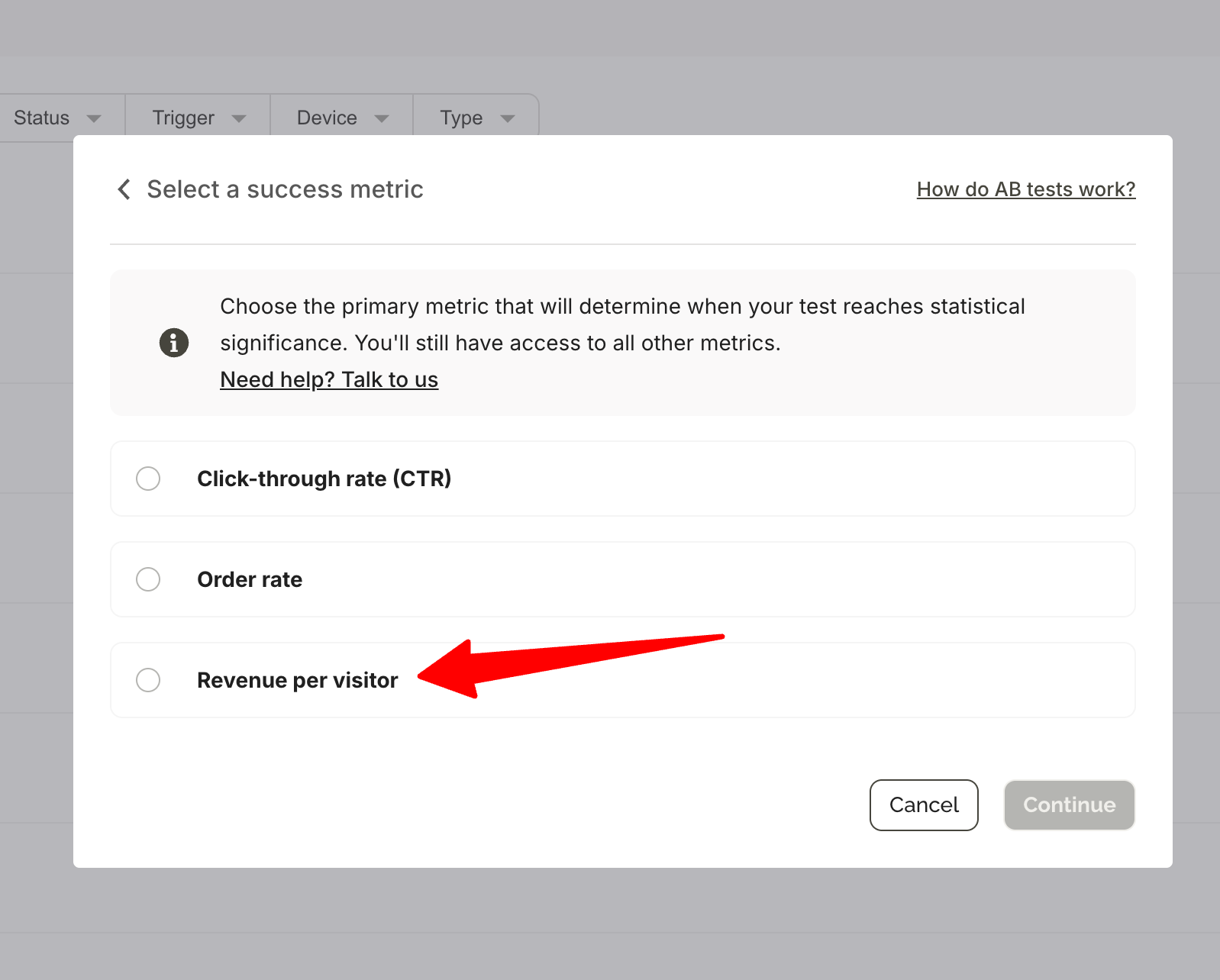

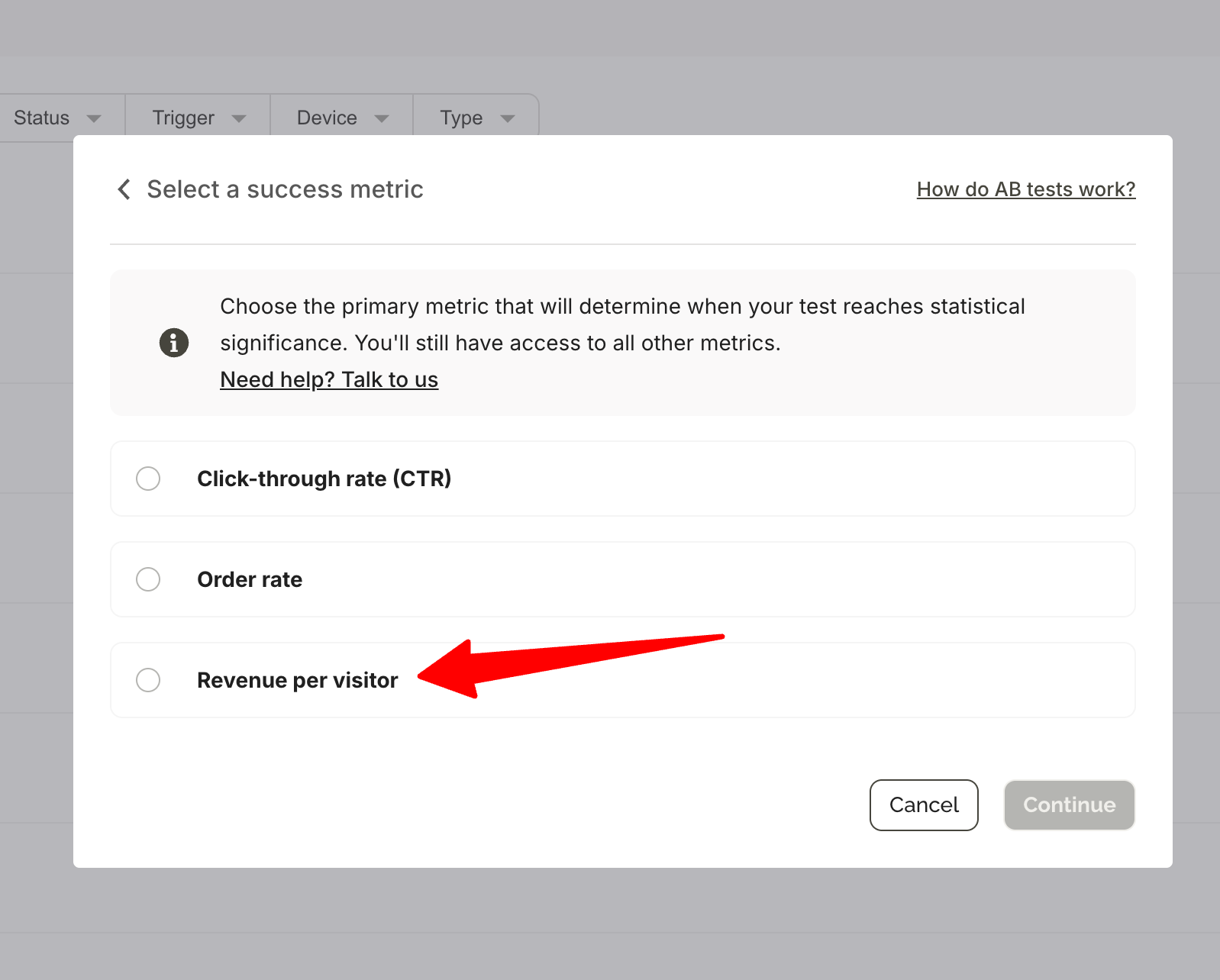

The default split when a control group is enabled is 34% / 33% / 33% (Variant A / Variant B / Control), though this can be adjusted. Select Revenue per visitor as your success metric.

One important detail: the control group follows the same targeting and triggering rules as your campaign. If your popup targets exit-intent visitors from paid traffic, the control group will also be drawn from that same audience — making the comparison fair.
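The key property here, that the control group is drawn from exactly the audience your targeting and triggers select, can be illustrated with a hash-based bucketing sketch. This is an assumption about how such a split could work, not Wisepops' documented implementation; `assign_bucket`, `route_visitor`, and the SHA-256 scheme are hypothetical. The weights mirror the default 34% / 33% / 33% split.

```python
import hashlib

# Hypothetical weights mirroring the default Variant A / Variant B / Control split.
SPLIT = [("variant_a", 34), ("variant_b", 33), ("control", 33)]


def assign_bucket(visitor_id: str, experiment_id: str) -> str:
    """Deterministically map a visitor to a bucket using a stable hash."""
    digest = hashlib.sha256(f"{experiment_id}:{visitor_id}".encode()).hexdigest()
    point = int(digest, 16) % 100  # 0..99, effectively uniform
    cumulative = 0
    for name, weight in SPLIT:
        cumulative += weight
        if point < cumulative:
            return name
    raise ValueError("split weights must sum to 100")


def route_visitor(visitor_id, experiment_id, matches_targeting, trigger_fired):
    """Apply targeting and trigger rules first, then split the eligible audience.

    Returns None for visitors outside the experiment, otherwise (bucket, show_popup).
    Control visitors are still recorded so their revenue can be compared later.
    """
    if not (matches_targeting and trigger_fired):
        return None
    bucket = assign_bucket(visitor_id, experiment_id)
    return bucket, bucket != "control"


counts = {name: 0 for name, _ in SPLIT}
for i in range(30_000):
    result = route_visitor(f"visitor-{i}", "exit-intent-test", True, True)
    counts[result[0]] += 1
print({name: round(c / 30_000, 3) for name, c in counts.items()})
```

Hashing the visitor ID keeps each visitor in the same group across page views, and because eligibility is checked before bucketing, every group shares the same targeting and triggers.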

The experiment dashboard becomes accessible 24 hours after launch and updates daily. You'll receive an automated email when the experiment reaches statistical significance at 95% confidence.

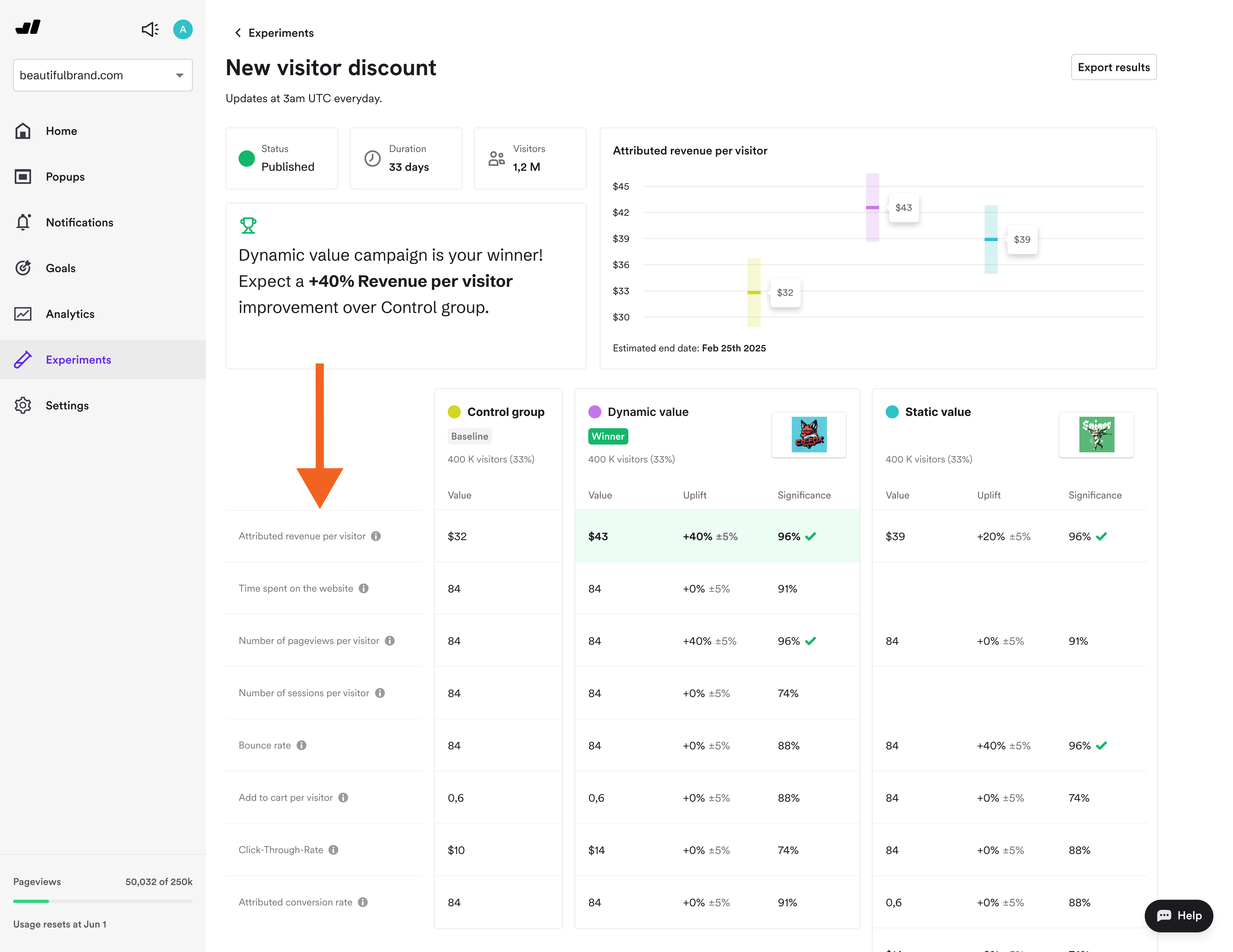

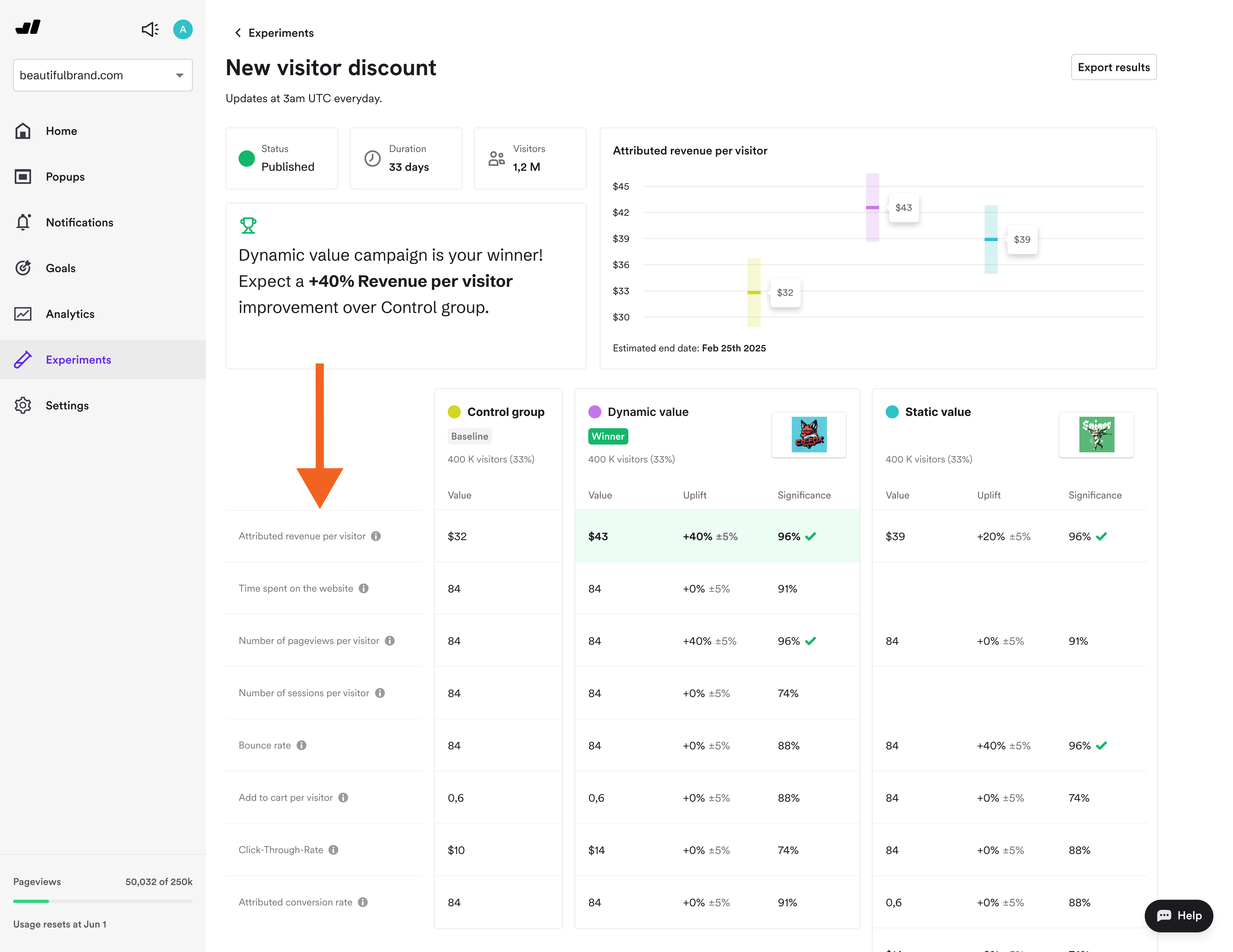

Reading the results of popup A/B tests with control group

The control group is the baseline. All uplift is calculated relative to it.

A +15% uplift in revenue per visitor with a ±5% confidence interval means Wisepops is 95% confident the true uplift falls between +10% and +20%. Wait for the green significance check before making any decisions — results before that point will shift as more visitors accumulate.
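The exact statistical method Wisepops uses isn't described here, so the following Python sketch shows one common approach under stated assumptions: a delta-method confidence interval for the relative difference in mean revenue per visitor between two independent groups. `relative_uplift_ci` is a hypothetical helper, and the per-visitor revenue data is made up.

```python
import math
from statistics import mean, variance


def relative_uplift_ci(campaign, control, z=1.96):
    """Relative RPV uplift of campaign vs control, with an approximate CI.

    campaign, control: per-visitor revenue lists (0.0 for visitors who didn't buy).
    Uses the delta method for a ratio of two independent means; z=1.96
    gives roughly 95% coverage for large samples.
    """
    m_t, m_c = mean(campaign), mean(control)
    var_t = variance(campaign) / len(campaign)  # variance of the campaign mean
    var_c = variance(control) / len(control)    # variance of the control mean
    uplift = m_t / m_c - 1
    var_ratio = var_t / m_c**2 + m_t**2 * var_c / m_c**4
    half_width = z * math.sqrt(var_ratio)
    return uplift, uplift - half_width, uplift + half_width


# Hypothetical data: 1 in 5 visitors buys; RPV is 2.30 vs 2.00 (+15%).
small = relative_uplift_ci([0.0, 0.0, 0.0, 0.0, 11.5] * 200, [0.0, 0.0, 0.0, 0.0, 10.0] * 200)
large = relative_uplift_ci([0.0, 0.0, 0.0, 0.0, 11.5] * 4_000, [0.0, 0.0, 0.0, 0.0, 10.0] * 4_000)
print([round(x, 3) for x in small])  # interval spans 0: not significant yet
print([round(x, 3) for x in large])  # interval excludes 0: significant
```

With the same +15% point estimate, 1,000 visitors per group give an interval that still includes zero, which is why you should wait for significance. At 20,000 visitors per group, the interval narrows to roughly ±4.5 percentage points.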

If the uplift is small or not statistically significant, the campaign may be capturing revenue that would have happened anyway rather than creating new revenue.

With Detailed Results enabled, you can see what's driving (or not driving) the lift:

| Metric | What it tells you |
|---|---|
| Revenue per visitor | Whether the campaign group spends more in total |
| Order rate | Whether the campaign drives more orders |
| Average order value | Whether the campaign increases basket size (Shopify only) |
| Sessions per visitor | Whether the campaign influences return visits |
| Bounce rate | Whether the campaign helps or hurts early engagement |

All metrics reflect the full visitor journey, including activity before the campaign was displayed.
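A useful way to read these metrics together is that revenue per visitor decomposes into order rate × average order value, which tells you whether a lift came from more orders, bigger baskets, or both. The Python sketch below assumes that "order rate" means orders per visitor and that a bounced session ends after a single page view; both definitions, the `VisitorStats` record, and the `detailed_metrics` helper are assumptions for illustration, not Wisepops definitions.

```python
from dataclasses import dataclass


@dataclass
class VisitorStats:
    sessions: int          # sessions this visitor had during the experiment
    bounced_sessions: int  # assumption: sessions that ended after a single page view
    order_values: list     # value of each order this visitor placed


def detailed_metrics(visitors):
    """Compute Detailed Results-style metrics for one experiment group."""
    n = len(visitors)
    orders = [value for v in visitors for value in v.order_values]
    revenue = sum(orders)
    sessions = sum(v.sessions for v in visitors)
    return {
        "revenue_per_visitor": revenue / n,
        "order_rate": len(orders) / n,  # assumption: orders per visitor
        "average_order_value": revenue / len(orders) if orders else 0.0,
        "sessions_per_visitor": sessions / n,
        "bounce_rate": sum(v.bounced_sessions for v in visitors) / sessions,
    }


group = [
    VisitorStats(sessions=2, bounced_sessions=0, order_values=[60.0]),
    VisitorStats(sessions=1, bounced_sessions=1, order_values=[]),
    VisitorStats(sessions=1, bounced_sessions=0, order_values=[40.0, 20.0]),
    VisitorStats(sessions=1, bounced_sessions=1, order_values=[]),
]
m = detailed_metrics(group)
# Revenue per visitor equals order rate x average order value under these definitions.
assert abs(m["revenue_per_visitor"] - m["order_rate"] * m["average_order_value"]) < 1e-9
print(m)
```

Running `detailed_metrics` on the campaign group and the control group separately, then comparing order rate and average order value, shows which side of that product the campaign moved.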

What to A/B test with revenue per visitor in your popups

Does this popup add revenue or just capture it?

Run any existing lead capture or cart recovery campaign against a control group.

If revenue per visitor is materially higher in the exposed group and statistically significant, the campaign is creating genuine lift. If the gap is small or not significant, you're likely attributing purchases that would have happened anyway.
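That decision can be written as a small rule over the uplift and its 95% confidence interval. In this Python sketch, `classify_lift` is a hypothetical helper, and the `material` threshold (the smallest uplift you'd consider worth acting on) is an assumption you should set for your own business.

```python
def classify_lift(uplift, ci_low, ci_high, material=0.05):
    """Turn a control-group result into a decision.

    uplift, ci_low, ci_high: relative RPV uplift vs control and its 95% CI bounds.
    material: hypothetical minimum uplift you consider worth acting on.
    """
    if ci_high < 0:
        return "reduces revenue"        # significantly worse than showing nothing
    if ci_low <= 0:
        return "not significant"        # may be capturing revenue that would happen anyway
    if uplift < material:
        return "significant but small"  # real, but possibly not worth the trade-offs
    return "genuine lift"


print(classify_lift(0.15, 0.10, 0.20))   # genuine lift
print(classify_lift(0.15, -0.05, 0.35))  # not significant
```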

Do product recommendations increase order value?

Add a product recommendation step after a discount code reveal for one variant, and skip it in the other.

Compare average order value and revenue per visitor. If the recommendation variant generates more revenue per visitor, the step is justified. If not, removing it won't cost you conversions.

Which trigger generates more incremental revenue?

Test exit-intent versus on-landing display of the same campaign, each measured against the control group. The trigger with the larger revenue per visitor uplift is the right one, regardless of which has a higher CTR.

Note: if targeting or triggering rules differ between variants, visitor distribution may not match your intended split, which can skew results. Keep all settings identical except the trigger itself for a clean comparison.
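The selection rule amounts to "rank by significant revenue-per-visitor uplift, not by CTR". In this Python sketch, the `TriggerResult` record, the `pick_trigger` helper, and all numbers are hypothetical, chosen so the higher-CTR trigger loses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TriggerResult:
    name: str
    ctr: float         # click-through rate, shown for contrast only
    rpv_uplift: float  # relative revenue-per-visitor uplift vs control
    ci_low: float      # lower bound of the uplift's 95% CI


def pick_trigger(results) -> Optional[TriggerResult]:
    """Choose the trigger with the largest significant RPV uplift; ignore CTR."""
    significant = [r for r in results if r.ci_low > 0]
    if not significant:
        return None  # keep testing: no trigger has beaten the control yet
    return max(significant, key=lambda r: r.rpv_uplift)


results = [
    TriggerResult("exit_intent", ctr=0.03, rpv_uplift=0.12, ci_low=0.04),
    TriggerResult("on_landing", ctr=0.06, rpv_uplift=0.05, ci_low=0.01),
]
print(pick_trigger(results).name)  # exit_intent, despite the lower CTR
```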

FAQ

What ROI can you expect from popup campaigns?

Results vary significantly by industry, strategy, and campaign maturity. Best-in-class Wisepops customers running active multi-campaign strategies have attributed 20–24% of total revenue to Wisepops campaigns, with ROI multiples reaching 200x in some cases.

A more realistic starting point for brands actively managing campaigns is around 15% attributed revenue. These figures come from customers using Wisepops across multiple campaign types, not popups in isolation.

What is revenue per visitor?

In the Experiments Platform's Detailed Results, revenue per visitor is the total revenue generated per visitor across all revenue-associated goals. As a success metric when setting up an experiment, it's defined as the average amount of revenue per visitor. In both cases, it's used to compare spending behavior between visitors who saw a campaign and a control group who saw nothing.

What's the difference between attributed revenue and incremental revenue?

Attributed revenue counts purchases from visitors who clicked a campaign CTA within the attribution window; it shows correlation. Incremental revenue is the difference in total revenue per visitor between an exposed group and a control group that saw nothing; it shows causation. Basic A/B testing uses attributed revenue. Control group experiments measure incremental revenue.

How do you measure the incremental revenue of a popup?

Set up a control group experiment. A share of visitors sees nothing; the rest see your campaign. Compare total revenue per visitor between both groups. The difference, once statistically significant at 95% confidence, is the incremental revenue the campaign drove beyond what visitors would have spent without it.

How long should the attribution window be?

The default in Wisepops is 5 days. We recommend setting it to 2 days. This is long enough to capture most purchase decisions (which typically happen within 48 hours of engagement) while reducing the chance of attributing purchases to campaigns that didn't actually influence the decision.
